Distance From the Outcome: Why AI Threatens Some and Supercharges Others

Whether AI amplifies or threatens you depends on your distance from the outcome, not your title. A practical framework for people and teams navigating AI in engineering and product work.

Published February 4, 2026 · Publisher: Adrien Brault, Sluicebox · Category: AI & Engineering

Same reality. Radically different framing. And I think the second framing is closer to the truth, but only if you understand who it applies to, and why.

The Reframe

The default narrative is about replacement. AI does what you do, therefore you're redundant. But that's not how it plays out in practice. When AI drafts most of the artifact, the human work shifts to defining the target, setting constraints, verifying correctness, and integrating into a larger system. A large chunk of execution time compresses, and what opens up is time for the work AI can't do yet: strategy, judgment calls, understanding customer problems, making architectural bets, mentoring people. The gain is often significant, though it varies by role and domain.

The reframe isn't “AI takes your job.” It's “AI compresses the low-leverage part of your role and expands the high-leverage part.”

But here's the honest caveat: this only works if you have high-leverage work to expand into. Which brings us to a more useful framework.

How Close Are You to the Outcome?

The best predictor of whether AI amplifies you or threatens you isn't your job title. It's your distance from the value outcome.

Think of it as a spectrum:

Close to the outcome

- You own the problem.

- You talk to customers.

- You decide what to build and measure if it worked.

- AI amplifies you.

Far from the outcome

- You receive specs, produce artifacts, pass them down the chain.

- Someone else decides if the result was valuable.

- AI can substitute for you.

When you're close to the outcome, you're the principal. You understand the problem, you're accountable for the result, you have the context to judge quality. AI becomes a power tool in your hands because you know what to point it at and you can evaluate what it gives you back.

When you're far from the outcome, you're an intermediate agent. You execute a well-defined link in a chain. Your value comes from execution on that link. And that's precisely what AI is getting good at: executing well-defined, bounded tasks.

A developer who takes a customer problem, figures out the right approach, implements it, ships it, and measures the impact is at distance zero. AI makes them faster at every step but replaces them at none, because the judgment connecting the steps is the real value.

A developer who receives detailed specs and writes conforming code is at distance two or three. Between them and the customer outcome, there's a PM who understood the need, a tech lead who defined the approach, and a QA engineer who validates the result. Each intermediary link is a point where AI can step in.

This gives people a concrete career direction: reduce your distance from the outcome. Learn to talk to customers. Understand why you're building what you're building. Take ownership of results, not just tasks. That's more actionable than the vague “learn to use AI” advice.

A fair counterpoint: even being close to the outcome isn't a guarantee. AI can allow fewer “principals” to cover more surface area, meaning one owner plus AI agents might handle what used to require three owners. What remains defensible is the stuff that's hardest to automate: accountability for ambiguous tradeoffs, cross-domain judgment, and the ability to navigate situations where the right answer isn't clear-cut.

Why Startups Win This Transition

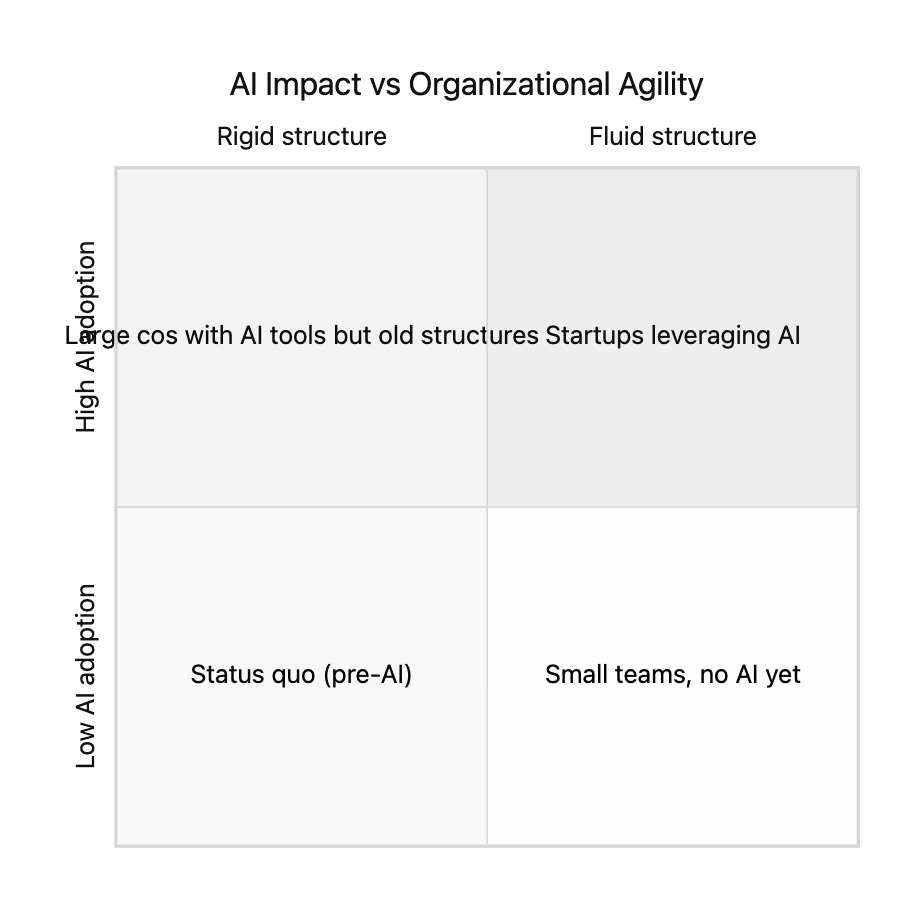

This brings us to the organizational layer, which is where things get structurally interesting.

Large organizations aren't just collections of people. They're structures crystallized around pre-AI workflows. Every handoff between teams, every specialized role, every approval chain exists because at some point, it was the optimal way to manage complexity with humans. You divide work into small pieces, specialize people, and put coordination processes between them. Classic division of labor.

The problem is that AI doesn't just speed up individual tasks. It changes the optimal unit of work. When one AI-augmented engineer, designer, or product manager can cover what previously required separate people to spec, implement, test, and deploy, the entire organizational structure built around that separation becomes overhead. Not just unnecessary. Actively harmful.

Here's a real example. At my company (a 10-person startup), coding agents recently shifted our primary bottleneck from implementation to code review. We felt it within days and started adapting our workflow. We're still experimenting, but the point is that it required us to revisit how we collaborate and where we spend attention. That kind of rapid workflow iteration is something a small team can do naturally. Now imagine that same realization hitting a 500-person engineering org with formalized review processes, cycle time dashboards, workflow tooling, and managers whose role is to oversee that process. Changing that is a six-month organizational project with political resistance at every step. Meanwhile, a startup has already iterated three times.

Large companies face a paradox: they can afford the best AI tools, but they can't easily reshape the organization to capture the full value. It's like putting a Formula 1 engine in a bus. The engine is powerful, but the vehicle wasn't designed for it.

A startup is more like a go-kart. The AI engine delivers disproportionate acceleration because the structure is light, people are close to outcomes, and there aren't ten layers between a decision and its execution.

Important nuance: small size alone isn't the advantage. A startup that replicates corporate silos in miniature (PM writes specs, dev implements, QA tests, DevOps deploys) doesn't really benefit. The advantage comes from consciously resisting over-specialization and keeping people close to outcomes.

Three Layers, One Framework

These ideas stack into a coherent model:

Individual level: AI compresses execution and frees time for judgment. The reframe is “60% more time for high-value work,” not “90% of my job is gone.”

Role level: Your vulnerability or advantage depends on your distance from the value outcome. Close to the outcome, AI amplifies. Far from it, AI substitutes.

Organizational level: Capturing AI's value requires reshaping workflows and structure, not just adopting tools. Organizations with less structural inertia adapt faster and capture more value during the transition.

The window won't stay open forever. Large organizations will eventually restructure, painfully, through layoffs and reorgs, to find their new optimal form. But historically, it's during these transition windows that startups break through. The question isn't whether AI changes the game. It's whether you and your organization can change shape fast enough to play it.
